May 14, 2014: Check out this brief video about vCPUs, CPUs and Virtual Machines.

Jul 16, 2013: Re: vcpu vs core, julienvarela, Jul 15, 2013 12:58 AM (in response to sakurai_tabi): In vSphere 5, you can now create a VM with virtual sockets and define a number of cores per socket.

An AMD processor corelet is architecturally equivalent to a logical processor. Certain future AMD processors will comprise a number of compute units, where each compute unit has a number of corelets. Unlike a traditional processor core, a corelet lacks a complete set of private, dedicated execution resources. It shares some execution resources with other corelets such as an L1 Instruction Cache or a floating-point execution unit. AMD refers to corelets as cores, but because these are unlike traditional cores, VMware uses the nomenclature of “corelets” to make resource sharing more apparent.


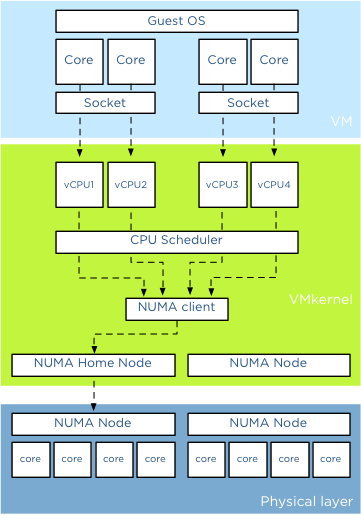

It might make a difference in how the guest OS and SQL view the vNUMA layout of the hardware. The guest might make decisions on memory locality based on incorrect information, but since the physical topology is more contiguous, I suspect it won't make much difference overall. VMware is generally fairly good about allocating resources appropriately. I wouldn't recommend this setup, but you'd likely have to do some careful benchmarks to actually see the performance difference directly. Since this will be a 'wide VM' (the CPU configuration exceeds the capacity of one physical socket), there is a chance the performance difference will be magnified, but I think vSphere 6.5 and later have improved how the hypervisor handles locality.
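For what it's worth, the 'wide VM' condition is easy to sanity-check by hand. Here is a rough Python sketch, not anything vSphere exposes: the function name and the example numbers are made up for illustration, and it assumes one pNUMA node per physical socket and ignores hyperthreading and memory size (a VM can also go wide because its memory doesn't fit in one node).

```python
def is_wide_vm(vcpus: int, cores_per_physical_socket: int) -> bool:
    """Return True if the VM needs more vCPUs than one physical socket provides.

    Assumption: one pNUMA node per physical socket, physical cores only.
    """
    if vcpus < 1 or cores_per_physical_socket < 1:
        raise ValueError("counts must be positive")
    return vcpus > cores_per_physical_socket


# Hypothetical host with 12 cores per socket.
print(is_wide_vm(16, 12))  # 16 vCPUs do not fit in one socket
print(is_wide_vm(8, 12))   # 8 vCPUs fit in one socket
```

If this returns True for your VM, the guest will span more than one pNUMA node and the vNUMA layout starts to matter.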

So in 6.5 it doesn't let you screw up the config and will set it correctly under the hood unless you have messed around with the advanced parameters for vNUMA. You can verify by running Coreinfo from Sysinternals inside the guest.

Both the 4 socket and 2 socket configs will map to 2 vNUMA nodes. FYI - Best practice is to keep everything in one pNUMA node. If you must go wide, you should match the vNUMA with pNUMA. So if you have 2 physical CPU sockets with 2 pNUMA nodes, you should have 2 vCPU Sockets/vNUMA nodes. Like I said though, 6.5 should optimize it.
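To make the rule of thumb above concrete, here is a minimal Python sketch that picks a virtual socket count for a given vCPU count. It is only an illustration under stated assumptions: `suggest_vcpu_layout` is a hypothetical helper, not a vSphere API, it assumes one pNUMA node per physical socket, and it refuses vCPU counts that don't split evenly instead of guessing.

```python
import math


def suggest_vcpu_layout(vcpus, physical_sockets, cores_per_physical_socket):
    """Return (virtual_sockets, cores_per_virtual_socket) for a VM.

    Assumption: one pNUMA node per physical socket, physical cores only.
    """
    if min(vcpus, physical_sockets, cores_per_physical_socket) < 1:
        raise ValueError("all counts must be positive")

    # Best case: the whole VM fits in a single pNUMA node.
    if vcpus <= cores_per_physical_socket:
        return (1, vcpus)

    # Otherwise go wide with as few nodes as possible.
    nodes = math.ceil(vcpus / cores_per_physical_socket)
    if nodes > physical_sockets:
        raise ValueError("VM has more vCPUs than the host has cores")
    if vcpus % nodes != 0:
        raise ValueError("vCPU count does not divide evenly across nodes")
    return (nodes, vcpus // nodes)


# Hypothetical host: 2 sockets, 12 cores each.
print(suggest_vcpu_layout(8, 2, 12))   # fits in one node
print(suggest_vcpu_layout(16, 2, 12))  # wide: split across both sockets
```

On the hypothetical 2 x 12 core host, a 16 vCPU VM comes out as 2 virtual sockets with 8 cores each, which lines up with the "2 vCPU sockets for 2 pNUMA nodes" advice.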